Programmatic SEO in 2026: Quality-First Scaling Without the Penalty

Template-based programmatic SEO is dead. Here's the AI + human hybrid model that scales to hundreds of thousands of pages without triggering thin-content penalties.

Programmatic SEO has had a strange decade. In 2018, generating thousands of templated pages from a database was an exotic growth tactic used by a handful of marketplaces. By 2022, it had become a default playbook for SaaS, directories, and travel sites. In 2024, the helpful content update gutted thousands of templated programmatic operations overnight. And in 2026, after the March core update, the cleanup is finally finishing — what survives is a different model entirely.

The version of programmatic SEO that works in 2026 looks almost nothing like the template-and-spin approach that dominated the previous cycle. Near-duplicate penalties, thin content classifiers, and a vastly more sensitive helpful content evaluation have ended the era of variable-substitution pages. Sites that tried to keep running the old playbook through the March update lost 40 to 90% of their indexed pages and most of their organic traffic. The teams that succeeded built something fundamentally different: AI-assisted, human-reviewed, semantically rich pages that happen to be produced at scale.

This guide explains what programmatic SEO actually is in 2026, why the previous model collapsed, what the AI + human hybrid model looks like in practice, and how to layer in the generative engine optimization signals that determine whether programmatic pages also earn citations from AI engines.

What Programmatic SEO Means in 2026

Programmatic SEO is the practice of generating many landing pages from structured data (locations, products, comparisons, use cases, integrations) using a defined template and content production process. The underlying logic has not changed: there is a long tail of high-intent queries that justify dedicated landing pages. Writing those pages one at a time does not scale, but the right system can produce them at the rate the opportunity demands.

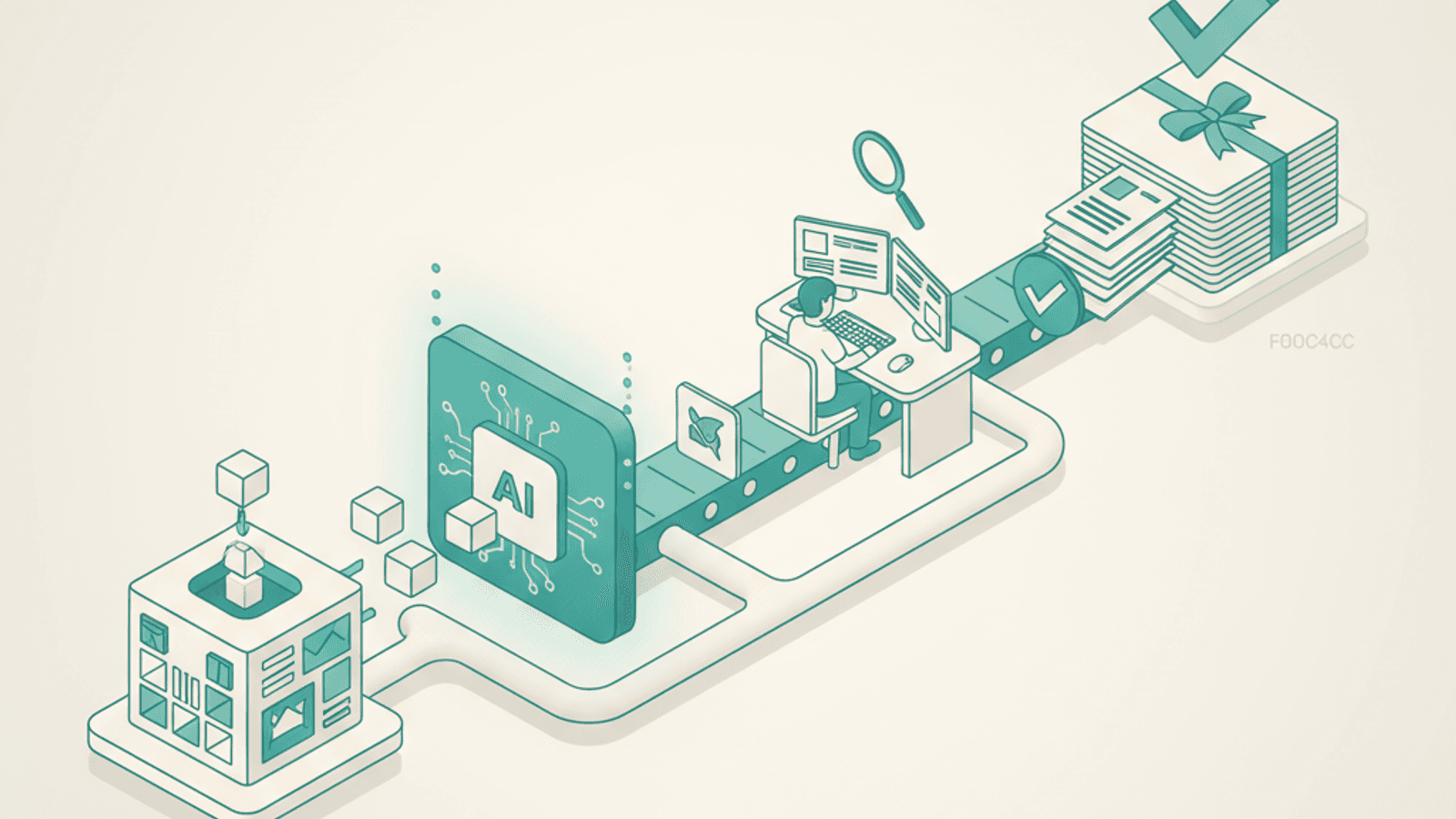

What has changed is the definition of "the right system." In 2018, the right system was a template, a data source, and a rendering engine. In 2026, the right system is a data source, an AI generation layer, a human review and enrichment step, and an ongoing quality monitoring loop. The production rate is similar. The output is qualitatively different.

The reason the model evolved is not philosophical. It is empirical: Google's classifiers now distinguish between pages that exist to answer a specific query well and pages that exist to capture search volume. A page about "best CRM for Philippine accounting firms" with original analysis, real comparison data, and verified expert review is a different artifact, to Google's evaluation systems, than a page generated by templating "best [category] for [vertical] in [country]" across ten thousand combinations.

Why Template-Based Programmatic SEO Died

The collapse of template-based programmatic SEO happened in three phases.

The first phase was the helpful content update, which launched in 2022 and was folded into Google's core ranking systems in March 2024. It began systematically downranking content that read as written-for-search rather than written-for-readers. Sites that had built sprawling programmatic operations on near-duplicate templates began to see thousands of pages lose visibility. Many recovered partially in subsequent updates by editing templates and adding variation.

The second phase was the March 2025 core update, which sharpened the classifier substantially. Pages that survived the helpful content update because they had slight variation now failed the deeper quality check. Google's evaluation increasingly looked not at whether two pages were identical but at whether each individual page actually delivered value beyond what a reader could find from a higher-quality source.

The third phase was the March 2026 core update, which essentially completed the transition. The shift toward authoritative, brand-owned, and government domains — and away from comparison aggregators and content built primarily for search visibility — left almost no surviving population of pure-template programmatic operations.

What remained, and what now grows, is the hybrid model. Sites running it are publishing programmatic pages that are individually defensible: each page tells a reader something specific, draws on real data, demonstrates evaluable expertise, and would be missed if removed. The volume is similar to old-style programmatic. The quality threshold is enforced page by page.

The AI + Human Hybrid Model

The hybrid model that works in 2026 has a consistent structure across the operations that have succeeded.

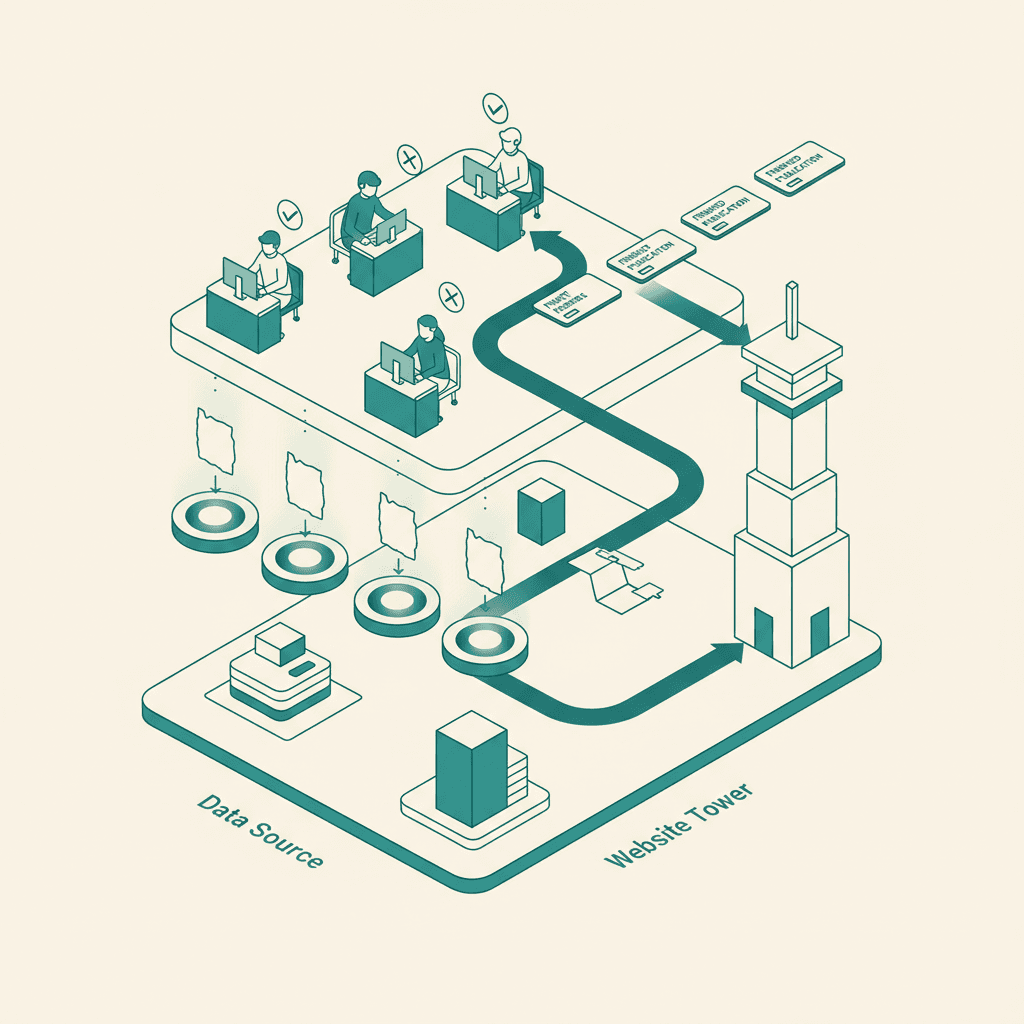

A data layer provides the structured inputs. For a directory site this might be business listings; for a SaaS, integration combinations; for a travel site, destination + activity pairs. The data layer is curated for quality — incomplete or low-value entries are excluded, not papered over.
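As a rough illustration of "excluded, not papered over," the curation step can be sketched as a filter that runs before any generation happens. The `Listing` fields, the required-field list, and the `MIN_REVIEWS` cutoff below are hypothetical choices for a directory site, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Listing:
    """One row of the data layer (hypothetical fields for a directory site)."""
    name: str
    address: Optional[str] = None
    hours: Optional[str] = None
    review_count: int = 0


# Assumed rule: every required field must be present, plus a minimum review count.
REQUIRED_FIELDS = ("name", "address", "hours")
MIN_REVIEWS = 3


def is_publishable(listing: Listing) -> bool:
    """Return True only if the listing is complete enough to back a page."""
    has_all_fields = all(getattr(listing, field) for field in REQUIRED_FIELDS)
    return has_all_fields and listing.review_count >= MIN_REVIEWS


def curate(listings: list[Listing]) -> list[Listing]:
    """Drop incomplete entries instead of generating filler text around them."""
    return [listing for listing in listings if is_publishable(listing)]
```

Entries that fail the check never reach the AI generation layer, so there is no draft to rescue later.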

An AI generation layer produces initial drafts for each page. The prompts are detailed and topic-specific, with explicit instructions about what kinds of statements need to be grounded in data, what kinds of claims are off-limits without verification, and how to flag uncertain content for human review. The best operations use multiple AI passes per page: one for primary content, one for examples and specifics, one for editing and tightening.

A human review and enrichment step is non-negotiable. A reviewer with subject-matter knowledge reads each draft, corrects factual errors, adds original insight that AI cannot generate on its own, ensures the page actually answers the query, and applies the editorial voice that distinguishes the brand. This is where the page transitions from "AI-generated content" to "human-published content that AI helped draft." Google's evaluation systems do not require human-only writing — they require human accountability. The hybrid model provides exactly that.

A quality monitoring loop catches drift over time. Pages are sampled and re-reviewed quarterly. Performance data — bounce rate, dwell time, query satisfaction signals — flags pages that need attention. Templates evolve based on what works and what does not. The whole system is treated as a living content operation, not a one-time generation event.
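A minimal sketch of that loop, assuming page-level analytics are already exported somewhere: one function flags pages by performance, another draws the quarterly re-review sample. The `PageStats` fields and the threshold values are illustrative assumptions and would need tuning per site.

```python
import random
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class PageStats:
    """Hypothetical per-page analytics record."""
    url: str
    bounce_rate: float        # 0.0 to 1.0
    avg_dwell_seconds: float


def flag_for_review(pages: Sequence[PageStats],
                    max_bounce: float = 0.85,
                    min_dwell: float = 20.0) -> list[str]:
    """Return URLs whose metrics suggest the page is not satisfying the query."""
    return [
        p.url for p in pages
        if p.bounce_rate > max_bounce or p.avg_dwell_seconds < min_dwell
    ]


def quarterly_sample(pages: Sequence[PageStats],
                     fraction: float = 0.1,
                     seed: Optional[int] = None) -> list[str]:
    """Pick a random share of pages for human re-review, regardless of metrics."""
    if not pages:
        return []
    rng = random.Random(seed)
    sample_size = max(1, round(len(pages) * fraction))
    return [p.url for p in rng.sample(list(pages), sample_size)]
```

Sampling independently of the metrics matters: it catches pages that perform acceptably but have drifted factually.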

The crucial change is the cost equation. Pure-template programmatic SEO was cheap because the marginal cost of an additional page was near zero. The hybrid model is more expensive — there is a real human review cost per page — but the per-page traffic and conversion outcomes are several times higher, and the page survives algorithm updates rather than getting wiped out by them.

Identifying a Real Programmatic Opportunity

Not every business has a programmatic SEO opportunity, and many of the businesses that think they do are wrong. The honest test has three parts.

There must be a long tail of high-intent queries that share a structural pattern. "Best CRM for [industry]" works. "What is [topic]" does not — generic informational queries are better served by a single high-quality pillar page than by a programmatic family.

There must be real data behind each page. A directory of Philippine restaurants needs actual restaurants, with hours, menus, addresses, and reviews. A comparison page for SaaS integrations needs the actual integration documentation, screenshots, and use case patterns. Without underlying data, programmatic pages have nothing to differentiate themselves and fail the quality test.

The expected per-page traffic must justify the per-page production cost. A page that will generate 5 visitors a month does not justify even 20 minutes of human review. Programmatic SEO works when the long tail is genuinely valuable in aggregate, not when it is being used to inflate page count for its own sake.
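The third test is simple arithmetic, and it can help to write it down. The sketch below compares a page's review cost against its expected monthly value. Every number in the usage note (hourly rate, conversion rate, value per conversion) is an assumption to be replaced with your own figures.

```python
def review_cost(minutes: float, hourly_rate: float) -> float:
    """Cost of human review for one page."""
    return minutes / 60 * hourly_rate


def monthly_value(visitors: float, conversion_rate: float,
                  value_per_conversion: float) -> float:
    """Expected revenue one page produces per month."""
    return visitors * conversion_rate * value_per_conversion


def payback_months(cost: float, value_per_month: float) -> float:
    """Months until the page earns back its production cost."""
    if value_per_month <= 0:
        return float("inf")
    return cost / value_per_month


# Assumed inputs: 20 minutes of review at $45/hour, 5 visitors a month,
# a 1% conversion rate, and $20 of value per conversion.
cost = review_cost(minutes=20, hourly_rate=45)
value = monthly_value(visitors=5, conversion_rate=0.01, value_per_conversion=20)
months = payback_months(cost, value)
```

Under those assumed inputs the page costs about $15 to review and earns about $1 a month, so payback takes roughly 15 months. A page family only makes sense when that figure is comfortably shorter across most of its pages.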

When all three conditions are met, the upside can be substantial. Recent case studies of well-executed programmatic operations published in 2026 describe sites scaling from low five-figure monthly visitors to 200,000 to 300,000 within twelve months, with conversion rates that hold up because each page is genuinely useful to the visitor it captures.

Architecture That Scales Without Penalty

The architectural decisions that determine whether a programmatic operation can scale safely fall into a small set of categories.

URL structure should be flat, clean, and pattern-consistent — the same principles that apply to SEO-friendly URLs generally, applied across thousands of pages with discipline. Avoid noise parameters, session IDs, or template artifacts in the URL. Each URL should be readable and stable.

Internal linking should follow the topic cluster pattern — programmatic pages link to and from a smaller number of pillar pages that establish topical authority for the cluster. This concentrates link equity into the hub pages that anchor the cluster, then distributes it through contextual links to the long-tail leaves.

Indexation control is critical at scale. Many programmatic operations fail because they ship thousands of pages that should not be indexed — incomplete pages, near-duplicate edge cases, low-value combinations. A working setup uses thoughtful canonical tags, robust noindex rules for pages that fall below a quality threshold, and progressive indexation rather than dumping the entire library into Google at once.
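One way to make the noindex and canonical rules concrete is to decide the head tags per page from a quality record. This is a sketch under stated assumptions: the `PageQuality` fields and the thresholds in `meets_threshold` are hypothetical, and how your rendering layer injects the tags is up to you.

```python
import html
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageQuality:
    """Hypothetical quality record kept for each generated page."""
    url: str
    word_count: int
    data_completeness: float              # share of data fields filled, 0.0 to 1.0
    human_reviewed: bool
    canonical_url: Optional[str] = None   # set when the page duplicates another


def meets_threshold(page: PageQuality) -> bool:
    """Assumed threshold: reviewed, reasonably complete, and not trivially short."""
    return (page.human_reviewed
            and page.data_completeness >= 0.8
            and page.word_count >= 400)


def head_tags(page: PageQuality) -> list[str]:
    """Return the canonical and robots tags to render for this page."""
    is_duplicate = bool(page.canonical_url) and page.canonical_url != page.url
    if is_duplicate:
        # Near-duplicate edge case: point at the primary page and stop there.
        target = html.escape(page.canonical_url, quote=True)
        return [f'<link rel="canonical" href="{target}">']
    tags = [f'<link rel="canonical" href="{html.escape(page.url, quote=True)}">']
    if not meets_threshold(page):
        # Below the quality bar: keep the page out of the index, let links be followed.
        tags.append('<meta name="robots" content="noindex, follow">')
    return tags
```

Pages that later pass review simply stop emitting the robots tag, which fits the progressive indexation approach described above.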

Page templates should produce variation, not duplication. Two pages in the same family should share structural elements (heading hierarchy, FAQ section, comparison table) but differ substantively in content. A page that differs from its sibling only in the variable values is exactly what the thin content classifier is built to catch.
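A quick internal check for this is to measure how much text two sibling pages share before publishing them. The sketch below uses word shingles and Jaccard similarity, a common near-duplicate heuristic. It is not a reproduction of any search engine's classifier, and the `SIMILARITY_LIMIT` value is an assumption to calibrate against your own page families.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Break text into overlapping k-word sequences, ignoring case and punctuation."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: str, b: str, k: int = 3) -> float:
    """Share of shingles two texts have in common (0.0 = disjoint, 1.0 = identical)."""
    set_a, set_b = shingles(a, k), shingles(b, k)
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


# Assumed cutoff: sibling pages at or above this overlap go back for enrichment.
SIMILARITY_LIMIT = 0.8


def too_similar(a: str, b: str) -> bool:
    """Flag a pair of sibling pages that differ in little more than variable values."""
    return jaccard(a, b) >= SIMILARITY_LIMIT
```

On full-length pages, two siblings that differ only in the substituted variables score close to 1.0, which is the signal to send them back for enrichment rather than publish them.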

Layering GEO and AEO Signals into Programmatic Pages

The opportunity that distinguishes 2026 programmatic SEO from earlier eras is that the same pages can be optimized for both Google rankings and AI engine citations. The signals overlap substantially, and adding the GEO and AEO layer is incremental work rather than a separate project.

The structural elements that AI engines look for — clear headings, FAQ blocks, structured data, semantic context, and identifiable authorship — are exactly the elements that make programmatic pages defensible against thin content classifiers. A page with proper schema, an FAQ section with structured data, a named author byline, and substantive content is more likely to rank in Google and more likely to be cited by ChatGPT, Perplexity, and Claude.

For programmatic operations specifically, the additional steps are mostly about consistency. Apply the same schema pattern across the entire page family. Use a small set of named authors with proper author pages, distributed across the library. Build a structured FAQ section on each page that genuinely answers buyer questions. Include enough context that an AI engine extracting an answer from the page can do so confidently.
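Applying the same schema pattern across a page family is easiest when the markup is generated from the same data as the page. The sketch below builds schema.org `FAQPage` JSON-LD from a list of question and answer pairs. The function names are hypothetical, and the example assumes the answers are the same text shown in the page's visible FAQ section.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD for the page head, escaping '</' so it cannot close the tag early."""
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'
```

Because the markup is derived from the reviewed FAQ content, the visible answers and the structured data cannot drift apart.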

This connects programmatic SEO directly to broader AI-powered SEO and SEO automation work. The systems that produce the pages can also produce the schema, the author attribution, and the FAQ structures. Done correctly, the operation produces pages optimized for both surfaces from a single production pipeline.

A Starter Framework

For teams new to programmatic SEO in 2026, the working sequence is consistent across successful operations.

- Validate the long tail. Use AI keyword research tools to confirm that the queries you want to capture have meaningful aggregate volume and tolerable competition. If the long tail is too thin or too competitive, programmatic SEO is the wrong tool.

- Build a small prototype. Start with 100 to 500 pages, not 10,000. Validate that the pages actually rank, actually convert, and actually survive a few weeks of crawl evaluation. Many ideas that look great at the template stage fail the prototype test.

- Establish the AI generation prompt library. Iterate on the prompts until the unreviewed AI output is at least 70% of the way to publishable. The closer the AI output is to publishable, the more sustainable the per-page review cost.

- Build the human review workflow. This is the part most teams underinvest in. A reviewer needs domain knowledge, editorial judgment, and tooling that lets them edit efficiently. Without a real workflow, the operation either skips review (and dies in the next update) or makes review so slow that scale is impossible.

- Ship in batches with monitoring. Release pages progressively, monitor indexation and rankings, and let the early signal inform the next batch. The teams that ship 50,000 pages at once almost always create more problems than they solve.
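The last step in the sequence above can be sketched as a simple gate: split the library into batches and release the next one only when enough of the pages already shipped are indexed. The `min_index_rate` of 0.6 is an assumed starting point, and the indexed count would come from whatever index coverage reporting you use.

```python
from typing import Iterator, Sequence


def batches(urls: Sequence[str], size: int) -> Iterator[list[str]]:
    """Yield the URL list in consecutive chunks of at most `size` pages."""
    if size <= 0:
        raise ValueError("size must be positive")
    for start in range(0, len(urls), size):
        yield list(urls[start:start + size])


def should_release_next(indexed: int, shipped: int,
                        min_index_rate: float = 0.6) -> bool:
    """Hold the next batch until enough of the shipped pages are indexed."""
    if shipped == 0:
        return True  # nothing shipped yet, so the first batch can go out
    return indexed / shipped >= min_index_rate
```

A low indexation rate on an early batch is a cue to revisit the template or the data before adding more pages.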

The compounding effect over twelve months is what makes the model worth the work. A programmatic operation that publishes 100 reviewed pages a month accumulates 1,200 high-quality pages in a year. That library supports an organic traffic base that strengthens a comprehensive SEO strategy instead of working against it.

When Programmatic Is the Wrong Answer

Some kinds of content do not belong in a programmatic operation, no matter how compelling the volume looks.

Content that requires deep expertise to be useful — medical advice, financial planning, legal guidance — is rarely a good fit. The cost of human review per page becomes high enough that the volume advantage disappears, and the consequences of errors are too significant to accept the risk of automated drift.

Content where the user wants a single authoritative answer, not a comparison or directory, is better served by a pillar page. "What is technical SEO" works as a single high-quality page; it does not benefit from being programmatically split into 50 sub-pages.

Content that competes against entrenched authoritative sources — Wikipedia for definitional queries, government sites for regulatory information — is unlikely to win programmatic battles even with high-quality pages. The investment is better spent elsewhere.

The clearest signal that programmatic is the wrong answer is when the team cannot articulate what each individual page in the family tells a reader that they could not get from a better source elsewhere. If that question has no good answer, the pages will not survive even with hybrid production.

Frequently Asked Questions

Is programmatic SEO still allowed by Google in 2026?

Yes, when done with real per-page quality. Google has been explicit that scale itself is not the problem — the problem is content that exists to capture search volume without delivering value. Programmatic operations that produce substantive, differentiated pages with proper human accountability remain compatible with Google's quality guidelines.

How much does it cost to run programmatic SEO with the hybrid model?

The per-page cost depends heavily on the topic and the depth of review required, but most operations land in the $5 to $30 per page range for a published, reviewed page. The volume that makes sense depends on the per-page traffic and revenue you expect, but most successful operations target several hundred to several thousand pages in the first year rather than tens of thousands.

Can AI fully write programmatic pages without human review?

No, not in a way that survives current ranking systems. Unreviewed AI content fails both the thin content classifier and the helpful content evaluation at meaningful rates, and even the pages that survive initially tend to lose visibility within a few months. The human review step is the difference between a programmatic operation that compounds and one that collapses.

What is the relationship between programmatic SEO and AI search visibility?

A well-built programmatic page is more likely to be cited by ChatGPT, Perplexity, and Claude than equivalent ad-hoc content, because the structural elements that make programmatic pages defensible (clear headings, FAQ blocks, schema, consistent author attribution) are exactly the signals AI engines weight when selecting citation sources. Programmatic SEO and AI search optimization reinforce each other when both are done well.

How long before a new programmatic operation starts generating meaningful traffic?

Most operations see initial indexation and early rankings within 4 to 8 weeks of shipping the first batch of pages. Meaningful traffic typically arrives in months 4 to 9 as more pages mature, internal linking compounds, and the cluster accumulates topical authority. Operations that look thin on traffic at the 60-day mark often look very different at the 180-day mark.